The Full Stack

NSL has everything you need to train a neural network without any external dependencies: tensors, automatic differentiation, optimizers, tokenizers, data loaders, and GPU acceleration.

Step 1: Create Parameters

Grad variables track their gradients automatically.

# A simple 2-layer network for MNIST (784 -> 128 -> 10)

let w1 = grad.variable(tensor.to_list(tensor.random([784, 128])))

let b1 = grad.variable(repeat(0.0, 128))

let w2 = grad.variable(tensor.to_list(tensor.random([128, 10])))

let b2 = grad.variable(repeat(0.0, 10))

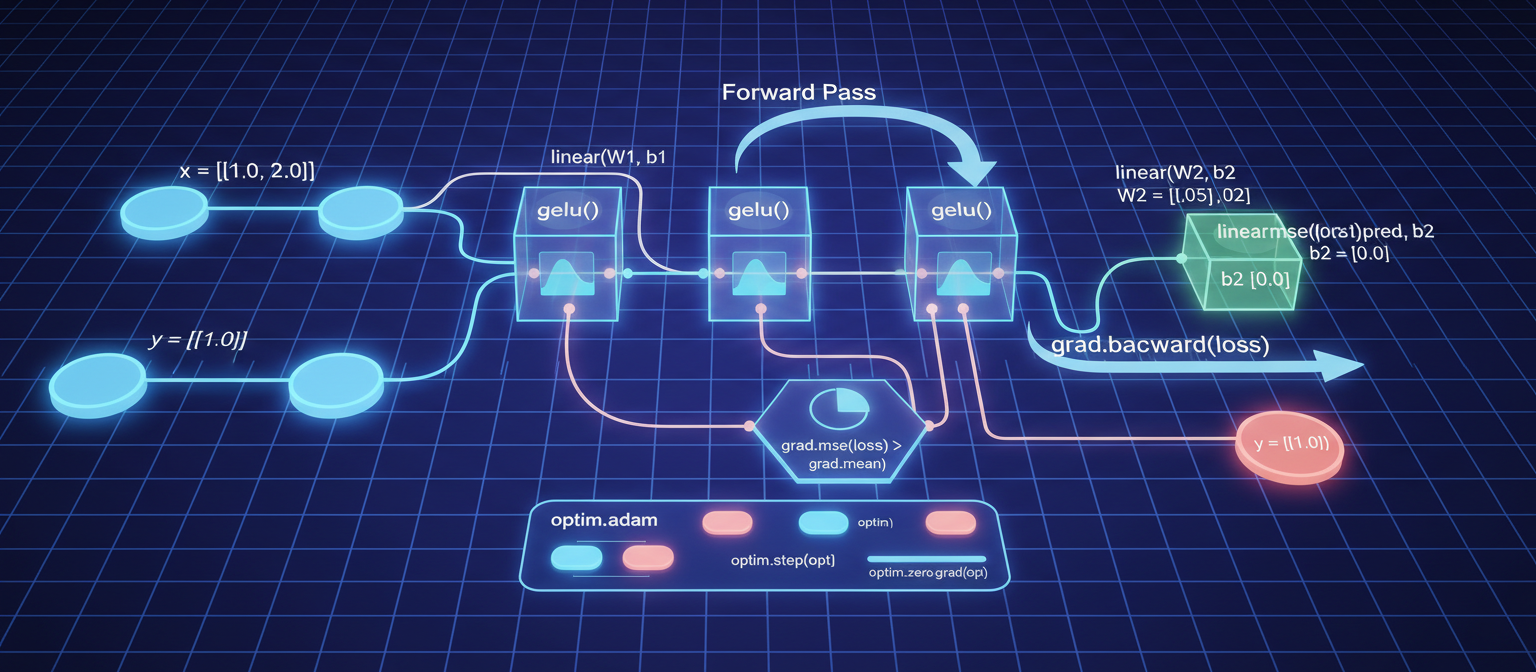

Step 2: Forward Pass

Build the computation graph from tracked grad operations, so that grad.backward can later differentiate through every step.

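:: forward(x) ->
    # Hidden layer: x * w1 + b1, followed by ReLU (784 -> 128)
    let h = grad.relu(grad.add(grad.matmul(x, w1), b1))
    # Output layer: h * w2 + b2, giving one logit per class (128 -> 10)
    let out = grad.add(grad.matmul(h, w2), b2)
    return out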

Available layers: grad.linear(x, weight, bias), grad.embedding(ids, weight), grad.layer_norm(x, gamma, beta), grad.dropout(x, rate).

Activations: grad.relu, grad.gelu, grad.sigmoid, grad.tanh, grad.softmax.
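To make the arithmetic concrete, here is a minimal pure-Python sketch of what forward computes: an affine layer, ReLU, then a second affine layer. It is not NSL code. The helper names (matmul, add_bias, relu) and the tiny 3 -> 2 -> 2 sizes are illustrative assumptions standing in for 784 -> 128 -> 10.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add_bias(m, bias):
    """Add a bias vector to every row of a matrix."""
    return [[v + b for v, b in zip(row, bias)] for row in m]


def relu(m):
    """Element-wise max(0, v)."""
    return [[max(0.0, v) for v in row] for row in m]


def forward(x, w1, b1, w2, b2):
    # Same structure as the NSL forward: relu(x @ w1 + b1) @ w2 + b2
    h = relu(add_bias(matmul(x, w1), b1))
    return add_bias(matmul(h, w2), b2)


# Toy example: a batch of one input with 3 features (hypothetical values).
x = [[1.0, 2.0, 3.0]]
w1 = [[1.0, -1.0], [0.0, 1.0], [1.0, -1.0]]  # 3 x 2
b1 = [0.0, 0.0]
w2 = [[1.0, 2.0], [3.0, 4.0]]                # 2 x 2
b2 = [0.5, -0.5]

out = forward(x, w1, b1, w2, b2)
print(out)  # [[4.5, 7.5]]
```

The second hidden unit is negative before ReLU (-2.0), so it is zeroed and contributes nothing to the output.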

Step 3: Loss and Backward

let logits = forward(x_batch)

let loss = grad.cross_entropy(logits, y_batch)

grad.backward(loss) # Compute all gradients via chain rule

grad.mse(predicted, target) is available for regression tasks.
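For reference, the two losses can be sketched in plain Python as below. This shows the conventional definitions (softmax cross-entropy averaged over the batch, and mean squared error); it assumes labels are integer class indices, and NSL's own label format and reduction may differ.

```python
import math


def cross_entropy(logits, labels):
    """Mean negative log-likelihood of the true class under softmax(logits).

    logits: list of rows, one row of raw scores per example.
    labels: list of integer class indices (an assumption of this sketch).
    """
    total = 0.0
    for row, label in zip(logits, labels):
        m = max(row)  # subtract the max before exp for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[label]
    return total / len(logits)


def mse(predicted, target):
    """Mean of squared differences between two equal-length lists."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)


# Two equal logits mean a 50/50 prediction, so the loss is ln(2).
print(cross_entropy([[0.0, 0.0]], [0]))
print(mse([1.0, 2.0], [1.0, 4.0]))  # 2.0
```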

Step 4: Optimize

let params = [w1, b1, w2, b2]

let opt = optim.adam(params, 0.001)

for each epoch in range(10)
    let loader = train.dataloader(train_data, train_labels, 32, true)
    let batch_num = 0
    while train.has_next(loader)
        let batch = train.next_batch(loader)
        let logits = forward(batch.data)
        let loss = grad.cross_entropy(logits, batch.labels)
        grad.backward(loss)
        optim.clip_grad_norm(params, 1.0)
        optim.step(opt)
        optim.zero_grad(opt)
        batch_num += 1
        print(train.progress(epoch, batch_num, train.num_batches(loader), loss))
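The clipping call in the loop guards against exploding gradients. A minimal Python sketch of the usual technique, clipping by global L2 norm, is shown below. That optim.clip_grad_norm works this way is an assumption based on the conventional meaning of the name; the function here is a standalone illustration operating on plain lists.

```python
import math


def clip_grad_norm(grads, max_norm):
    """Rescale gradients in place so their combined L2 norm is at most max_norm.

    grads: list of flat gradient lists, one per parameter.
    Returns the norm measured before clipping.
    """
    total_norm = math.sqrt(sum(g * g for grad in grads for g in grad))
    if total_norm > max_norm:
        scale = max_norm / total_norm
        for grad in grads:
            for i in range(len(grad)):
                grad[i] *= scale
    return total_norm


# Two parameters whose gradients have a combined norm of 5.0.
grads = [[3.0], [4.0]]
norm_before = clip_grad_norm(grads, 1.0)
print(norm_before)  # 5.0
print(grads)        # roughly [[0.6], [0.8]]: direction kept, norm reduced to 1.0
```

All gradients share one scale factor, so the update direction is preserved and only its length changes.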

Step 5: Learning Rate Scheduling

let total_steps = 10 * train.num_batches(loader)

let step = 0

# Cosine annealing

let lr = train.lr_cosine(0.001, step, total_steps, 0.0001)

optim.set_lr(opt, lr)

# Linear warmup + decay

let lr = train.lr_linear(0.001, step, 100, total_steps)

# Step decay (multiply by 0.1 every 30 steps)

let lr = train.lr_step(0.001, step, 30, 0.1)
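The three schedules can be sketched in Python as follows. The argument order mirrors the NSL calls above, but the parameter meanings (a minimum rate for cosine, a warmup length for linear, a step size and decay factor for step decay) are inferred from the comments, and the formulas are the conventional ones; NSL's exact behavior may differ.

```python
import math


def lr_cosine(base_lr, step, total_steps, min_lr):
    """Cosine annealing from base_lr down to min_lr over total_steps."""
    progress = min(step, total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


def lr_linear(base_lr, step, warmup_steps, total_steps):
    """Linear warmup from 0 to base_lr, then linear decay to 0.

    Assumes total_steps > warmup_steps > 0.
    """
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


def lr_step(base_lr, step, step_size, gamma):
    """Multiply base_lr by gamma once every step_size steps."""
    return base_lr * gamma ** (step // step_size)


# Print each schedule at a few points of a hypothetical 1000-step run.
for step in (0, 50, 100, 500, 1000):
    print(
        step,
        lr_cosine(0.001, step, 1000, 0.0001),
        lr_linear(0.001, step, 100, 1000),
        lr_step(0.001, step, 30, 0.1),
    )
```

In a training loop the chosen schedule is evaluated once per optimizer step, and the result is passed to optim.set_lr before optim.step.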

Step 6: Save and Load

JSON checkpoints:

train.save_checkpoint("model.json", params, opt, epoch, {accuracy: 0.95})

let ckpt = train.load_checkpoint("model.json")

NCF binary checkpoints (smaller, compressed):

ncf.save("model.ncf", {
    w1: grad.tensor(w1), b1: grad.tensor(b1),
    w2: grad.tensor(w2), b2: grad.tensor(b2),
    epoch: epoch
})

Step 7: Tokenization (for NLP)

let tok = tokenizer.create("bpe")

tokenizer.train(tok, corpus_texts, 8000)

tokenizer.add_special(tok, ["[PAD]", "[UNK]", "[CLS]", "[SEP]"])

let ids = tokenizer.encode(tok, "Hello world")

let text = tokenizer.decode(tok, ids)

let batched = tokenizer.encode_batch(tok, batch_texts, true) # with padding

tokenizer.save(tok, "tokenizer.txt")
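Batch encoding with padding exists because sequences in a batch must share one length before they can be stacked into a tensor. The Python sketch below shows the idea; the pad ID of 0, right-side padding, and the returned attention mask are assumptions of this illustration, not documented behavior of tokenizer.encode_batch.

```python
def pad_batch(sequences, pad_id=0):
    """Right-pad token ID lists to the length of the longest sequence.

    Returns (padded, mask), where mask holds 1 for real tokens and 0 for padding.
    """
    max_len = max(len(seq) for seq in sequences)
    padded = [seq + [pad_id] * (max_len - len(seq)) for seq in sequences]
    mask = [[1] * len(seq) + [0] * (max_len - len(seq)) for seq in sequences]
    return padded, mask


# Hypothetical token IDs for three texts of different lengths.
padded, mask = pad_batch([[5, 6, 7], [8], [9, 10]])
print(padded)  # [[5, 6, 7], [8, 0, 0], [9, 10, 0]]
print(mask)    # [[1, 1, 1], [1, 0, 0], [1, 1, 0]]
```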

GPU Acceleration

Operations automatically use the GPU when tensors are large enough:

tensor.matmul / grad.matmul -- GPU when dimensions >= 64

grad.relu, grad.gelu, grad.softmax -- GPU when n >= 4096

tensor.batched_matmul -- GPU for multi-head attention

No flags, no device management, no .cuda() calls. It just works.

Peak performance on NVIDIA GPUs with tensor cores: up to 27 TFLOPS (FP16) and 14.97 TFLOPS (TF32). CPU path: AVX-512 SGEMM at 1,648 GFLOPS.

Loading Pretrained Weights

let weights = grad.from_safetensors("model.safetensors")

# Returns dict: name -> variable ID

let embed = weights["model.embed.weight"]
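To find out which names a file contains before loading it, the safetensors header can be inspected directly: the file starts with an 8-byte little-endian length, followed by a JSON header of that size that maps each tensor name to its dtype, shape, and byte offsets. The Python sketch below reads only that header; the function name is illustrative and it does not touch NSL.

```python
import json
import struct


def read_safetensors_header(path):
    """Return {tensor_name: {"dtype", "shape", "data_offsets"}} for a safetensors file."""
    with open(path, "rb") as f:
        # First 8 bytes: unsigned 64-bit little-endian size of the JSON header.
        (header_len,) = struct.unpack("<Q", f.read(8))
        header = json.loads(f.read(header_len).decode("utf-8"))
    # "__metadata__" is an optional free-form entry, not a tensor.
    header.pop("__metadata__", None)
    return header


def list_tensors(path):
    """Print each tensor's name, dtype, and shape."""
    for name, info in read_safetensors_header(path).items():
        print(name, info["dtype"], info["shape"])
```

Calling list_tensors("model.safetensors") prints the available keys, such as the model.embed.weight entry used above.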